Backstage

Menu

Statting Lineup

Blog Posts

For those that enjoy reading, seeing visuals, and having immediate access to data sources that are used. The frequency of posts varies greatly and merely depends on when I have an idea come to mind and when I have the time to address it. Feel free to contact me with any blog ideas or desires.

|

Tomorrow, (Sunday, December 4th), the results from the Hall of Fame's 2023 Contemporary Era Committee ballot will be announced. The ballot consists of 8 players that made their primary impact after 1980, and you can view the players on the ballot here. With this in mind, I thought I would take a break from my Player Value research to see what my Hall of Fame predictive model thought of these candidates, as well as share my own thoughts and provide the current version of Player Value for each candidate's career. I'll go over some details of my Hall of Fame predictive model and its use on the 2022 ballots first; feel free to skip ahead if you'd just like to see the model's and my thoughts on the 2023 Contemporary Era ballot candidates. Hall of Fame Predictive Model Overview I first introduced my model and used it on the 2022 BBWAA ballot here. I entered the model into the 2021 Fall USCLAP competition during my final semester in college, and it ended up finishing in 2nd place. You can view the winners here, and the official report here. The report dives into the nitty gritty of the model, if you are interested in predictive modeling and learning those fine details. As a quick(ish) summary, the model is intended to only be used on position players that finished their careers after 1957, and that did not use steroids or have some other obvious scandal that is the primary deterrent of the Hall of Fame induction. There are some players, such as Barry Bonds and Pete Rose, that are statistically pretty obvious Hall of Fame inductees, but that are left out because telling the model that someone of their caliber isn't a Hall of Famer would confuse it, since it only considers their performance on the field. While I could have keyed in a "character clause" predictor in the model to handle this, I felt it easier to just exclude the players in question. Gold Gloves and other awards are fairly important predictors of whether a player is in the Hall of Fame, and earlier years (i.e. pre-1957) lacked many of these awards, so the model would unfairly judge these earlier players. Since pitchers are judged on an entirely different basis than position players, it didn't make sense to predict them using the same model, so pitchers are excluded as well. Lastly, Negro League players and stats are not incorporated in the model. While these leagues were basically equally competitive as the Major Leagues at the time (as seen by the dominance of early transition players like Jackie Robinson and Roy Campanella), they played far shorter seasons and have less recorded statistics, so these players' stats and model predictions would be flawed when considering their career statistics. The model uses 5 classes of predictors:

Defensive statistical averages per season, such as putouts per 162 games, were considered but not used due to their lack of predictive power. In fact, most of a player's defensive Hall of Fame value is only encompassed in their Gold Gloves. Only their fielding percentage and range factor per game differences from league average ended up being predictive from the career defensive statistics. Generally, the most important predictors for the various submodels (listed below) ended up being a player's All-Star seasons, career runs scored, career singles, and career RBI. In fact, a simple decision tree model can be run to visualize these important predictors: This simple decision tree model predicts anyone with at least 7 All-Star seasons and 1,208 runs scored as a Hall of Famer, an assertion that's right every time based on the dataset. It also predicts anyone with at least 7 All-Star seasons, less than 1,208 runs scored, but more than 1,239 singles as a Hall of Famer, an assertion that's only right about half the time based on the dataset. Anyone with fewer All-Star seasons, runs scored, or singles is predicted as not a Hall of Famer, an assertion that we can see is nearly always correct. This simple decision tree model is not as accurate as my actual Hall of Fame model, but it's surely better than a coin-flipping approach and is very easy to interpret and helps us visualize that All-Star seasons and runs scored are the preeminent predictors of a player's Hall of Fame fate. Again, this was just an illustrative example - this is NOT my actual Hall of Fame model. My initial model was completed just before the results from the 2022 Golden Days Era Committee ballot were announced. You can check out those candidates here. That ballot consisted of 9 players (7 position players and 2 pitchers) and 1 manager. Jim Kaat, Gil Hodges, Tony Oliva, and Minnie Minoso would all go on to be inducted into the Hall of Fame. The initial model performed really well, with an AUC of .9817. AUC stands for Area Under the Curve, and is basically a measure of model accuracy on a scale of 0 to 1. An AUC of 0.5 represents a random guess, coin flip approach. The higher the AUC, the more accurate the model, and my model's AUC was quite high. The simple decision tree model that I displayed above had an AUC of .8105. We technically care about the test AUC, which is the AUC of the model on the test set, meaning the data/players that the model was not trained or developed on. The training set are the players used to essentially teach the model, and then the test set are the players used to evaluate the model's accuracy. My Hall of Fame predictive model is an ensemble model of 4 different submodels:

With 187 players in my dataset overall, I placed 141 in the training set and 46 in the test set. Of the 46 players in the test set, 17 were Hall of Famers, and thus 29 were not. My model correctly predicted 28 of the 29 non-Hall of Famers, asserting that Dave Parker should be a Hall of Famer (personally, I agree!). It correctly predicted 15 of the 17 Hall of Famers, stating that Lou Brock and Alan Trammel were not up to par. The initial model was designed for predicting future players on each year's BBWAA ballot, not the Era Committee ballots. The Hall of Famers and non-Hall of Famers that the model was trained on were players that already had their BBWAA fates decided, and any candidate on an Era Committee ballot would have been rejected by the BBWAA already. Because of this, simply using the same initial dataset that trained the initial model and then predicting the 2022 Golden Days ballot players is a flawed approach that results in none of the players being predicted as Hall of Famers. For any player such as Ken Boyer that was in the training set, the model will predict them as a non-Hall of Famer since that is exactly what the model was told when training on the data. While it could predict players in the test set still fine, all of the Golden Days candidates in the test set were not predicted as Hall of Famers. The proper approach is to remove the 7 position players - Dick Allen, Ken Boyer, Gil Hodges, Roger Maris, Minne Minoso, Tony Oliva, and Maury Wills - from the data and retrain the model on this adjusted dataset. Doing this worsens the predictive accuracy of the model down to .9477. While this is worse than the initial model's AUC of .9817, it is still great overall. Nonetheless, this reduction in accuracy foreshadows how the model thinks of these players. Removing the information that told the model that these players weren't Hall of Famers made it worse. After applying this adjusted version of the model to the players on the 2022 Golden Days ballot, only Dick Allen was predicted as a Hall of Famer. This isn't too shocking, as Allen's bWAR of 58.7 is larger than those of the players' who ended up getting inducted - Hodges at 43.9, Oliva at 43.0, and Minoso at 53.8. Allen is also 23rd all-time in career OPS+ at 156, tied with Frank Thomas and the most of any player that isn't active, used steroids, banned from baseball, or simply archaic (sorry Pete Browning and Dave Orr). His OPS+ is higher than that of both Hank Aaron and Willie Mays. Outside of Jim Kaat - whose 16 Gold Gloves are the 2nd most all-time by a pitcher (and thus isn't handled by the model) and has 287 wins with 2,461 strikeouts - I personally wasn't too sold on any of the players being inducted on last year's era ballot, but Allen would have been at the top of my consideration. As I wrote about previously, when applying this adjusted version of the model to the players on the 2022 BBWAA ballot, only David Ortiz and Todd Helton were predicted as Hall of Famers. Of course, Ortiz was inducted his first year on the ballot with 77.9% of the vote. Helton received 52% of the vote in his 4th year on the ballot, an increase from his 44.9% received in 2021. When applying the Hall of Fame predictive model to the 2023 Contemporary Era ballot, there are 2 approaches we can take:

Albert Belle, OF Years: 1989-2000 Teams: Cleveland Indians, Chicago White Sox, Baltimore Orioles Accolades: 5x All Star, 5x Silver Slugger, 3x RBI Leader, 2x SLG Leader, 1x Runs, Doubles, HR Leader Key Stats: 381 HR, 1,239 RBI, 389 Doubles, 1,539 G, 6,676 PA, .933 OPS, 144 OPS+, 3,300 TB Player Value: 312.15 Total, 244.60 Batting Value, -2.52 Baserunning Value, 70.05 Fielding Value Photo courtesy of WKBN 27 Model Talk: The initial combined ensemble model gives Belle a Hall of Fame probability of .2379, which rounds down to 0 and thus predicts him as not a Hall of Famer. The FDA submodel is particularly unimpressed with Belle, giving him a probability of just .0008. The GLM model isn't too fond of Belle either, giving him a probability of .2145. The model averaged neural network holds a similar stance, with a probability of .1667. However, the SVM model does think Belle should be a Hall of Famer, giving him a probability of .5695. While the final combined ensemble model's AUC was .9664, the GLM and FDA submodels were actually more accurate after the training set updates, with AUCs of .9800 and .9811, respectively. The SVM and neural network submodels were still worse, however, with respective AUCs of .9054 and .9391. Given that the most accurate submodel gives Belle the lowest probability, and the least accurate submodel gives Belle the highest probability, we can conclude that the model doesn't like Belle too much. It did give him a higher combined probability than Hall of Famer Alan Trammel (.1039), however. Since the ensemble model isn't actually the best in this case, and since the 4 submodels have varying accuracy, another approach for the final probability is to compute a weighted average based off of the accuracy of each submodel, rather than using a simple average. That is to say, weight the GLM and FDA predictions more heavily since they are more accurate, rather than treating them equally as the SVM and neural network predictions. This alternative approach makes the ensemble model's AUC now slightly higher at .9706. This alternative approach has a pretty minimal effect. Each of the submodel probabilities are the same, but Belle's new final ensemble probability is now slightly lower at .2321. Again, this is due to the more accurate submodels giving him lower probabilities of being a Hall of Famer. What if we update the training set with the 2022 Hall of Fame results and then retrain the model? The resulting ensemble model is worse, with a lower AUC of .9286. The updated FDA submodel has an AUC of .8948, the updated GLM submodel has an AUC of .9206, the updated SVM submodel has an AUC of .9246, and the updated neural network submodel has an AUC of .9206. So, the FDA and GLM submodels got worse, as did the neural network submodel and the ensemble model overall, but the SVM submodel actually became more accurate with the 2022 Hall of Fame results. This updated simple average ensemble model gives Belle a probability of .2430, slightly higher than without the updates but still not enough to be predicted as a Hall of Famer. The FDA submodel probability is .0022, the GLM submodel probability is .2115, the SVM submodel probability is .4680, and the neural network submodel probability is .2904. Lastly, if we use the weighted average ensemble model with the training dataset that includes the 2022 Hall of Fame results, Belle's new ensemble probability is slightly higher at .2450. Still not high enough to be predicted as a Hall of Famer. In this case, the now less accurate FDA submodel is weighted less while the now more accurate SVM model is weighted more. The FDA submodel liked Belle the least, and the SVM submodel liked him the most, so the increase here makes sense. Interestingly enough, in this case the weighted average ensemble model is just as accurate as the simple average ensemble model, as both had an AUC of .9286. Predictive Model Verdict: Not a Hall of Famer My Thoughts: Maybe you don't think Belle's career totals are that impressive, and maybe you're wondering why I included his career games played and career plate appearances under his "Key Stats". The answer is context. Belle played in just 12 seasons, including his first 2 seasons when he played in just 71 games combined. So out of 10 real full seasons, he was an All-Star half of the time and a Silver Slugger half of the time. He also finished in the top 10 in MVP voting for 5 of those seasons, and in my opinion was robbed of the 1995 MVP by Mo Vaughn, who he bested in basically every offensive category (you can see for yourself here). He hit 30+ HR in 8 of those seasons, and hit 28 and 23 in the other two. He had 100+ RBI in 9 of those seasons, and recorded 95 in the other one. He hit 30+ doubles in 9 of those seasons, and hit 23 in the other one. Consistently 30 doubles, 30 homers, and 100 RBI per season? I'll take that. In terms of the 255 players used in my predictive model dataset, Belle ranks 6th in doubles per 162-game season with 40.9, behind 4 Hall of Famers (Medwick, Greenberg, Hafey, Herman) and Nomar Garciaparra. He ranks 7th in RBI per 162-game season with 130.4, behind 6 Hall of Famers (Gehrig, Greenberg, DiMaggio, Ruth, Foxx, Simmons). He ranks 3rd in HR per 162-game season with 40.1, behind 2 Hall of Famers (Ruth and Kiner). Looking at the LF JAWS leaderboard, he ranks 10th in MVP shares behind Barry Bonds, Pete Rose, Manny Ramirez, and 6 Hall of Famers. I will note that WAR doesn't like Belle's peak quite as much, as his 7 year peak WAR of 36.0 ranks just 29th all-time among left fielders (but ahead of him are 16 HoFers, Rose, and Bonds). That is all to say that Belle had a tremendous peak. But it wasn't just that he was great for a decade and then slowly panned out; his career was abruptly cut short at the age 33 due to a hip injury. I can't emphasize this enough, as I feel it is frequently overlooked when discussing Belle's case. Kirby Puckett and Roy Campanella had career-ending injuries at 35 and are in the Hall of Fame. Ralph Kiner had a career-ending injury at 32 and is in the Hall of Fame. Heck, even Ross Youngs was done by 29 due to illness and is somehow in the Hall of Fame. So, why not Belle? When a player's great career is suddenly cut short, I prefer to give him the benefit of the doubt. Belle's total Player Value of 312.15, under the current version, ranks 66th out of the 4,737 position players since 1974, which puts him in the top 1.4%. His Batting Value of 244.60 ranks him 59th, which is the top 1.25%. Clearly Player Value thinks Belle's peak was sufficiently great! My Opinion: Put Him In Don Mattingly, 1B Years: 1982-1995 Teams: New York Yankees Accolades: 9x Gold Glove, 6x All Star, 1x MVP, 3x Silver Slugger, 3x Double Leader, 2x Hit Leader Key Stats: 2,153 H, .307 BA, 442 Doubles, 222 HR, 1,099 RBI, 7,722 PA, 1,785 G Player Value: 205.42 Total, 66.50 Batting Value, -0.33 Baserunning Value, 139.25 Fielding Value Photo courtesy of NBC Sports. Model Talk: The initial ensemble model gives Mattingly a combined probability of .2787, rounding down to a non Hall of Famer prediction. The FDA model has him at just .0331, while the GLM, SVM, and neural network models are slightly more positive, giving him respective probabilities of .3066, .4377, and .3375. If we use the approach where we weight the submodels based off of their accuracy, Mattingly's new ensemble probability becomes .2749, even worse than before. Again, the GLM and FDA submodels were the most accurate (highest AUCs) and they gave Mattingly the lowest Hall of Fame probabilities. If we use the model that was retrained on the training data that includes the 2022 Hall of Fame voting results, the simple average ensemble model gives Mattingly a notably higher probability of .4351, but this is still too low to be predicted as a Hall of Famer. The FDA submodel gives him a probability of just .0076, the GLM submodel gives him a probability of .4479, the SVM submodel gives him a high porbability of .7002, and the neural network submodel gives him a solid probability of .5849. If not for the FDA submodel's tiny probability (which now has the lowest AUC and is thus the least accurate after the 2022 updates), then Mattingly might have been predicted as a Hall of Famer by the simple average ensemble model. Lastly, if we use the weighted average ensemble model with the training dataset that includes the 2022 Hall of Fame results, Mattingly's new ensemble probability is slightly higher at .4384. Still not high enough to be predicted as a Hall of Famer. In this case, the now less accurate FDA submodel is weighted less while the now more accurate SVM model is weighted more. The FDA submodel liked Mattingly the least, and the SVM submodel liked him the most, so the increase here makes sense. Predictive Model Verdict: Not a Hall of Famer My Thoughts: Mattingly also retired early at age 34, but due to a more gradual deterioration via back injuries rather than due to a sudden career-ending injury. His 9 Gold Gloves are the 2nd most by a first baseman in history, behind only Keith Hernandez (who I also think should be inducted and been included on this ballot). Besides these two, every other players with at least 9 Gold Gloves is in the Hall or is still having their fate decided. I think the many Gold Gloves are what drives Mattingly's case for me, while still not being bad offensively. Despite a shorter career he still amassed at least 2,000 hits and 1,000 RBI, won a batting title, led the league in OPS in 1986 when he finished 2nd in MVP voting, and led the league in doubles 3 times. Only 9 players that spent at least 50% of their time at first had a higher career batting average than Mattingly's .307 with as many plate appearances. Of those 9, 7 are in the Hall of Fame, Todd Helton is still awaiting his fate (I think he should be in), and the last is Stuffy McGinnis, whose .307 career batting average is contextually not as impressive given that he played in an earlier era where higher batting averages were more common. Mattingly may not have been a powerhouse offensively, and WAR may disagree with his defensive ability, but he won 9 Gold Gloves nonetheless and like Dale Murphy below is another player that can bolster the lackluster Hall of Fame membership of players from the '80s. Mattingly's total Player Value of 205.42, under the current version, ranks 155th out of the 4,737 position players since 1974, which puts him in the top 3.27%. At least under the current iteration, this suggests that Don is more 'Hall of Great' territory. His Fielding Value of 139.25 ranks him 127th, which is the top 2.68%. My Opinion: Put Him In Fred McGriff, 1B Years: 1986-2004 Teams: Toronto Blue Jays, Atlanta Braves, Tampa Bay Devil Rays, San Diego Padres, Chicago Cubs, Los Angeles Dodgers Accolades: 5x All Star, 3x Silver Slugger, 2x HR Leader Key Stats: 493 HR, 2,490 H, 1,550 RBI, 1,305 walks, 1,349 R Player Value: 247.50 Total, 180.52 Batting Value, -0.57 Baserunning Value, 67.55 Fielding Value Photo courtesy of Sports Illustrated. Model Talk: The initial ensemble model gives McGriff a solid probability of .6322, rounding up to a Hall of Fame prediction. The FDA submodel loves McGriff, giving him a .9019 probability. The GLM submodel thinks otherwise, giving him just a .3492 probability. The SVM and neural network submodels also support his candidacy with probabilities of .5418 and .7357, respectively. If we use the approach where we weight the submodels based off of their accuracy, McGriff's new ensemble probability becomes .6330, slightly higher than before. The FDA submodel was the most accurate (highest AUC) and it gave McGriff the highest Hall of Fame probability, thus the increase. If we use the model that was retrained on the training data that includes the 2022 Hall of Fame voting results, the simple average ensemble model gives McGriff a notably lower probability of .3710, which is now low enough to not be predicted as a Hall of Famer. The FDA submodel gives him a much lower probability of just .0694, the GLM submodel gives him a probability of .4100, the SVM submodel gives him a probability of .5260, and the neural network submodel gives him a probability of .4785. The FDA submodel went from loving McGriff to hating him, and became much less accurate in the process. Lastly, if we use the weighted average ensemble model with the training dataset that includes the 2022 Hall of Fame results, McGriff's new ensemble probability is slightly higher at .3733. Still not high enough to be predicted as a Hall of Famer. In this case, the now less accurate FDA submodel is weighted less while the now more accurate SVM model is weighted more. The FDA submodel liked McGriff the least, and the SVM submodel liked him the most, so the increase here makes sense. Predictive Model Verdict: Hall of Famer, ignoring last year's results My Thoughts: McGriff may not have won an MVP, but he finished in the top 10 in voting 6 times. The only first basemen with more top 10 MVP finishes are 5 HoFers (Gehrig, Thomas, Murray, Killebrew, Ortiz), 2 future HoFers (Pujols, Cabrera) and Freddie Freeman. Tied with McGriff with 6 top 10 MVP finishes are 3 HoFers (Mize, Terry, Bagwell), 2 active players that I think are likely future HoFers (Votto, Goldschmidt), Ryan Howard, and Andres Gallaraga. McGriff's 493 home runs are tied with Lou Gehrig for the 12th most by a first basemen and the 29th most across all positions. The 11 first basemen with more homers are 7 Hall of Famers, 2 future Hall of Famers (Pujols, Cabrera) and 2 notable steroid users (McGwire, Palmeiro). Of the 28 players with more homers, every single one is either in the Hall of Fame, used steroids, still active, or not yet eligible for the Hall of Fame. Fred McGriff has the most home runs of any "clean" player that has been thus far rejected for the Hall of Fame. I personally think reaching 500+ home runs should automatically qualify a player for the Hall, granted that they didn't use steroids, and historical voting seems to reflect this rule. The fact that McGriff has been excluded due to 7 homers is absurd, especially when we consider the 1994 strike-shortened season. In 1994 the Braves (like all other MLB teams) played a shortened schedule of 114 games, of which McGriff played in 113. In those 113 games, McGriff hit 34 home runs, good for a pace of about .3 homers per game. Across a normal full 162 game season, that's 48.6 homers. McGriff played in 113 out of 114, or 99.12% of his team's games. That brings the hypothetical full season total down to 48.17, which we'll round down to 48. That's 14 more HR than his actual 34 in 1994. With this short hand math, we estimate McGriff would have had 507 career home runs, if not for the 1994 strike. McGriff's 1,550 RBI rank 47th most all-time. You can take a look at this list and see that all of the players ahead of him are either in the Hall, used steroids, or haven't been on a ballot yet (Beltre and Beltran). He ranks 15th in RBI among first basemen, with the usual HoF/steroid/not yet eligible suspects ahead of him. His 2,490 career hits also rank 15th among first basemen. He hit 30+ home runs in 10 seasons, a feat achieved by just 21 players. Besides Carlos Delgado, every other player that has done this is either in the Hall, will be in the Hall, or used steroids. The advanced metrics don't like McGriff as much. His WAR of 52.6 ranks 30th all-time among first basemen, below the Hall of Fame positional average of 65.5 (which would rank 14th). His JAWS of 44.3 ranks 31st all-time among his position, also below HoF average. But should the clean guy with the most HR and RBI not in the Hall be excluded, especially if he would have reached the essentially automatic qualifier of 500 HR if not for a strike? I think not. McGriff's total Player Value of 247.50, under the current version, ranks 119th out of the 4,737 position players since 1974, which puts him in the top 2.5%. His Batting Value of 180.52 ranks him 94th, which is the top 1.98%. My Opinion: Put Him In! Dale Murphy, OF Years: 1976-1993 Teams: Atlanta Braves, Philadelphia Phillies, Colorado Rockies Accolades: 7x All Star, 2x MVP, 5x Gold Glove, 4x Silver Slugger, 2x HR and RBI Leader Key Stats: 398 HR, 2,111 H, 1,266 RBI, 350 doubles Player Value: 166.45 Total, 220.28 Batting Value, 1.48 Baserunning Value, -55.31 Fielding Value Photo courtesy of Baseball Egg. Model Talk: The initial ensemble model gives Murphy a probability of .5079, barely rounding up to a Hall of Fame prediction. The FDA submodel isn't too high on Murphy, giving him a probability of .2701. The GLM submodel is slightly more favorable at a .3986 probability. The SVM submodel is a virtual toss-up with a .4943 probability. The neural network submodel is a big fan of Murphy, giving him a .8687 probability. If we use the approach where we weight the submodels based off of their accuracy, Murphy's new ensemble probability becomes .5043, slightly lower than before. The SVM and neural network submodels were the least accurate (lowest AUCs) and they gave Murphy the highest Hall of Fame probabilities, thus the decrease. However, these submodels were still accurate enough and still gave Murphy high enough probabilities to still merit a Hall of Fame prediction overall. If we use the model that was retrained on the training data that includes the 2022 Hall of Fame voting results, the simple average ensemble model gives Murphy a slightly higher probability of .5125, which is high enough to be predicted as a Hall of Famer. The FDA submodel gives him a probability of .1462, the GLM submodel gives him a probability of .5207, the SVM submodel gives him a high probability of .7555, and the neural network submodel gives him a solid probability of .6274. Lastly, if we use the weighted average ensemble model with the training dataset that includes the 2022 Hall of Fame results, Murphy's new ensemble probability is slightly higher at .5153. Still not high enough to be predicted as a Hall of Famer. In this case, the now less accurate FDA submodel is weighted less while the now more accurate SVM model is weighted more. The FDA submodel liked Murphy the least, and the SVM submodel liked him the most, so the increase here makes sense. Predictive Model Verdict: Hall of Famer, regardless of last year's results My Thoughts: Murphy has the accolades worthy of a Hall of Famer, but his cumulative career totals are somewhat lacking. We can't make any type of career hits, homers, or RBI arguments for Murphy like we can with McGriff. But he did win 2 MVPs, which only 5 center fielders have done in history. The other 4 are future HoFer Mike Trout and 3 HoFers (Mantle, Dimaggio, Mays). Not a bad crowd. WAR does disagree with his winning of these MVPs, however, favoring Gary Carter instead in 1982 and John Denny or Dickie Thon in 1983. There have been a total of 32 players that have won multiple MVPs in history, and just 11 have won at least 3 with only Barry Bonds winning more than 3. Of the 31 other dudes, 23 are in the Hall of Fame, 3 used steroids (Bonds, A-Rod, Juan Gonzalez), and 3 are future Hall of Famers (Pujols, Cabrera, Trout). The remaining 2 are Bryce Harper - who is still active and likely a future Hall of Famer as well - and Roger Maris. Murphy has nearly 800 more hits, 100 more HR, and 400 more RBI than Maris, as well as 4 more Gold Gloves. Their cases are very similar, but Murphy was able to stay around a few seasons longer than Maris was, and was better defensively (at least in terms of awards; Rfield has Murphy at -34 and Maris at 45). Amongst center fielders, Murhpy's 398 home runs actually track pretty well, ranking him 8th. Ahead of him are 5 HoFers (Mays, Griffery Jr., Mantle, Dawson, Snider) and 2 players whose Hall of Fame fates have yet to be truly decided in Andruw Jones and Carlos Beltran. In general, the 1980s are underrepresented in Cooperstown. Greats like Darryl Strawberry, Dave Stewart, Keith Hernandez, Dwight Gooden, and Dave Stieb have all been excluded. Sports Reference's Adam Darowski shared a split of Hall of Famers by their debut year on Twitter. The 1980s have just 16, compared to 22 from each of the '50s and '60s, and a whopping 46 from the '20s. Murphy was another one of the '80s greats, and inducting him could help begin righting this wrong. Murphy's total Player Value of 166.45, under the current version, ranks 212th out of the 4,737 position players since 1974, which puts him in the top 4.48%, not quite Hall of Fame caliber. His Batting Value of 220.28 ranks him 66th, however, which is the top 1.39%. I certainly think that the offensive side of Player Value is currently more accurate than the defensive side. My Opinion: Put Him In So the model says to put 1 to 2 guys in, and I'd put all 4 in given the chance. What can I say, I'm a "big Hall" guy. The following players weren't predicted by the model since they're pitchers or used steroids, but here are my thoughts on their Hall of Fame cases: Curt Schilling In my hypothetical 2022 ballot, I highly emphasized that I thought Schilling should be in Cooperstown. Straight from that earlier post: "Historically, certain career marks have been guarantees for induction. One such milestone is 3,000 strikeouts, which only 19 pitchers have done in history. Of these, 2 are active players (Max Scherzer and Justin Verlander), 1 is not eligible for the ballot yet (C.C. Sabathia), and 1 used steroids (Roger Clemens). Of the remaining 15 pitchers with 3,000 or more strikeouts, 14 of them are in the Hall of Fame and the other is Curt Schilling. Schilling's 3,116 career K's are good for 15th all-time, more than Hall of Famer John Smoltz's career total in about 200 less innings, and just 1 less than Hall of Famer Bob Gibson's career total in about 600 less innings. Schilling's career WAR of 79.5 is 26th best among starting pitchers and the most of any starting pitcher not in the Hall of Fame, with the exception of Clemens. Schilling also rocks an impressive 6 All-Star game seasons, 3 World Series, and a World Series MVP. While he never won a Cy Young award, he did come in 2nd place three times and in 4th place once. People like to rag on Schilling's character, which is admittedly deplorable, but... [t]he Hall contains the best baseball players in history, and Curt Schilling is clearly one of them and therefore should be inducted." Max Sherzer and Justin Verlander have since passed Schilling in K's to now rank him 17th all-time, but the point still stands. Schilling has no connection to steroids and absolutely should be inducted as one of the game's great pitchers, regardless of how objectively awful of a person he is. The current version of Player Value has Schilling at 188.64, ranking him 29th among the 6,077 pitchers since 1974. His Pitching Value of 237.97 ranks 14th. Barry Bonds My hypothetical 2022 ballot also included Bonds. I'm not going to hash out his case all over, but feel free to click the link above under Schilling to review what I previously stated. The short of it is that I'm generally against steroid users in the Hall of Fame, but make an exception for Bonds who was clearly a Hall of Famer prior to his steroid use and was statistically significantly better than his steroid counterparts. That was my stance for his final year on the BBWAA ballot, which included 394 voters. The 2023 Contemporary Era Committee will consist of just 16 voters. I still think Bonds ought to be in, but I'd rather the first undeniable steroid user that is inducted to be voted in by more people via the BBWAA ballot. Plus, Bonds just had his chance last year on the BBWAA ballot; other worthy and steroid-free candidates on the ballot have had to wait longer for their next chance at the Hall. The current version of Player Value has Bonds at 1,201.08, easily the most of any player since 1974. His Batting Value of 1,015.49 also ranks 1st, while his Fielding Value of 166.82 ranks 77th. Roger Clemens My stance on Clemens is basically exactly what I stated for Bonds above. Statistically, obviously a Hall of Famer, but his steroid use calls him slightly into question. Nontheless, I would have put him on my BBWAA ballot last year. However, I think the larger BBWAA ballot should sort out the steroid users before we let just 16 (or really, only 12) people determine if they should be inducted. The current version of Player Value has Clemens at 555.94, ranking him 2nd among the 6,077 pitchers since 1974. His Pitching Value of 518.04 ranks 1st. Rafael Palmeiro Palmeiro is in the same boat for me as Bonds and Clemens, he just wasn't on the 2022 BBWAA ballot. He is clearly a Hall of Famer when you ignore the steroids, clinching the "automatic" qualifiers of both 500+ home runs and 3000+ hits. Given his steroid use, there are more preferable guys to use for the ballot's limited number of spots. The current version of Player Value has Palmeiro at 329.68, ranking him 61st among players since 1974. His Batting Value of 198.59 ranks 76th. I emphasized the current version of Player Value because it is far from complete, but still, it's not that bad or wrong as is. Most Batting Value since 1974? Barry Bonds. Most Baserunning Value since 1974? Rickey Henderson. Most Fielding Value since 1974? Ozzie Smith. Most Fielding Value among pitchers since 1974? Greg Maddux. Most Pitching Value since 1974? Roger Clemens. My Hypothetical 2023 Contemporary Era Ballot:

The actual 16-person committee members that will vote for this ballot was announced recently, and includes former Braves Hall of Famers Greg Maddux and Chipper Jones, both of whom were teammates with Fred McGriff from 1993 to 1997. Frank Thomas spent 2 years with Alberte Belle on the Chicago White Sox in 1997 and 1998. Lou Whitaker would have been a great candidate to benefit from the committee's makeup given the inclusion of TIgers teammates Jack Morris and Alan Trammel, but alas. Lee Smith and Ryne Sandberg (and Greg Maddux) played some with Rafael Palmeiro on the Cubs in the late '80s before he really burst onto the scene. Needless to say, I think the committee's makeup will certainly benefit McGriff the most. As usual, I'll end with a file dump - My USCLAP paper on my Hall of Fame predictive model:

A PowerPoint presentation I gave on the model:

Dataset of players used:

Datasets for 2022 Golden Days, 2022 BBWAA, and 2023 Contemporary Era ballots:

R files for the initial model and 2022 BBWAA predictions, 2022 Golden Days predictions, and 2023 Contemporary Era predictions:

Adjusted datasets that you'll need to run the 2022 and 2023 era ballot R files above:

And that should be everything you need to dig deeper into or replicate the results. Thank you all for reading! Looking forward to sharing more Player Value findings soon, as well as my model's predictions for the upcoming 2023 BBWAA Hall of Fame ballot. Statting Lineup Newsletter Signup Form:

If you'd like to receive email updates for each new post that I make, sign up for the Statting Lineup newsletter using the link below: https://weebly.us18.list-manage.com/subscribe?u=ab653f474b2ced9091eb248b1&id=3a60f3b85f

0 Comments

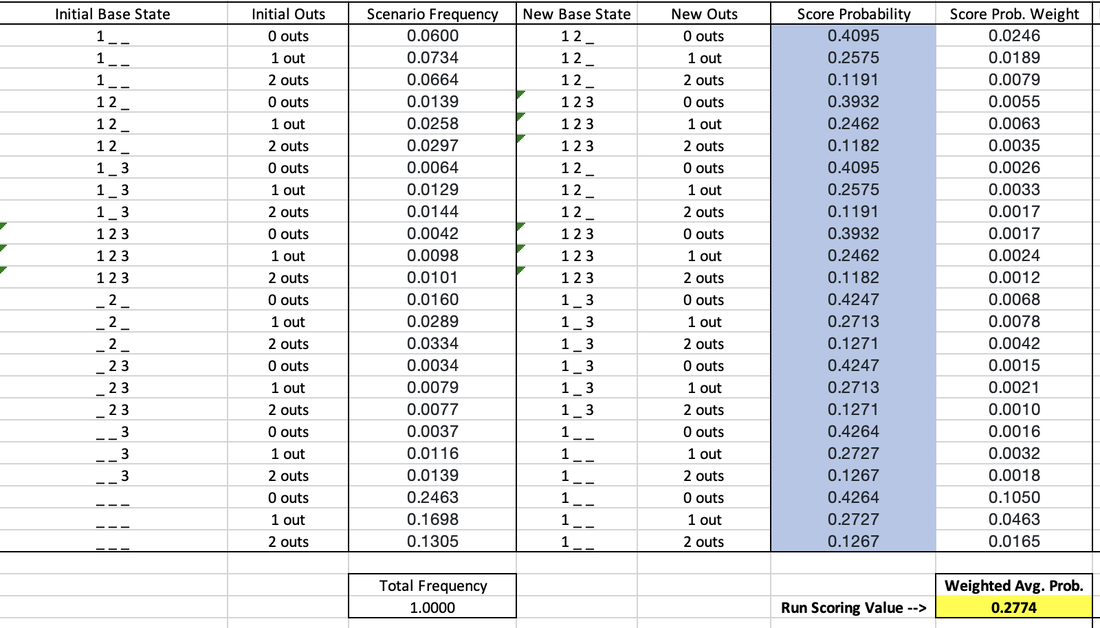

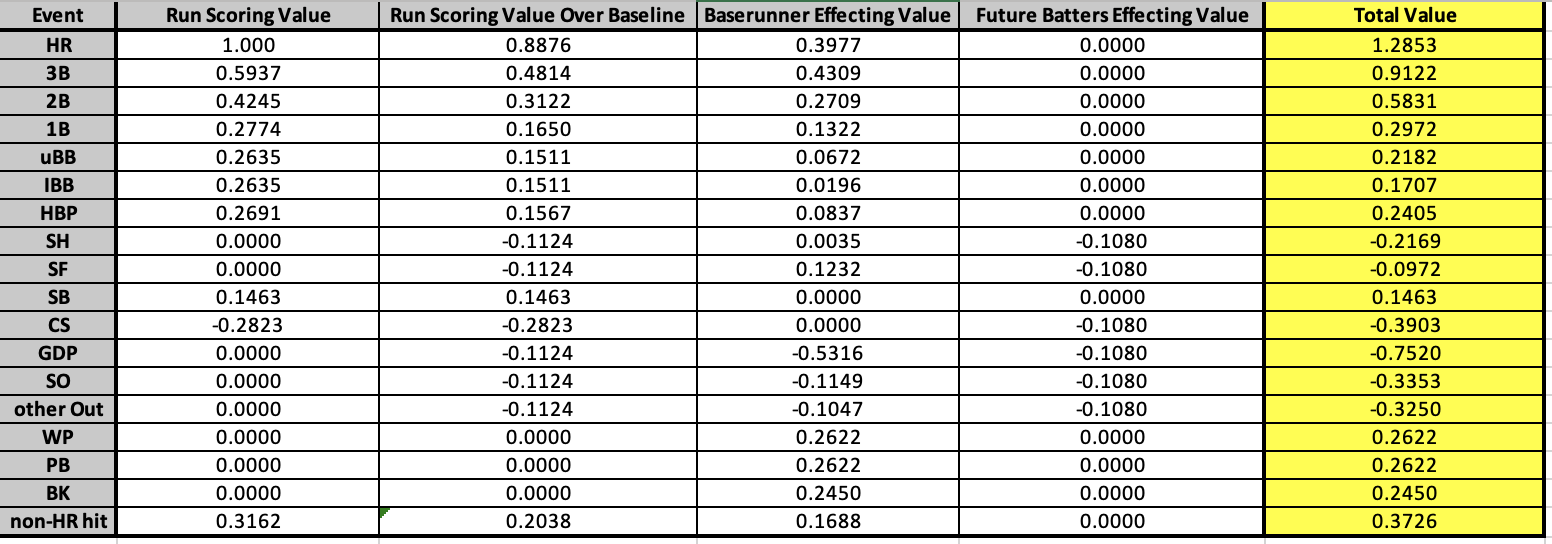

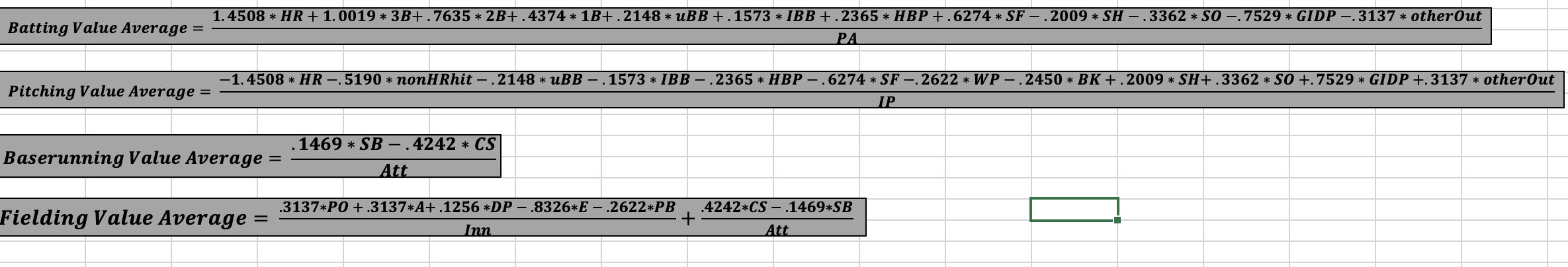

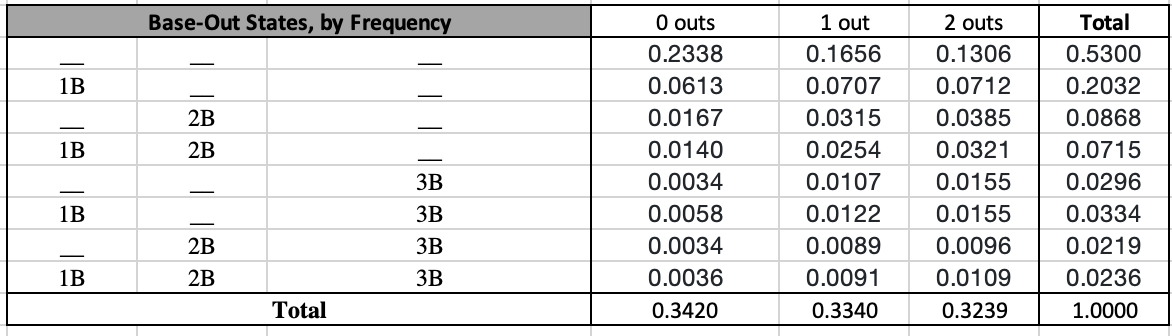

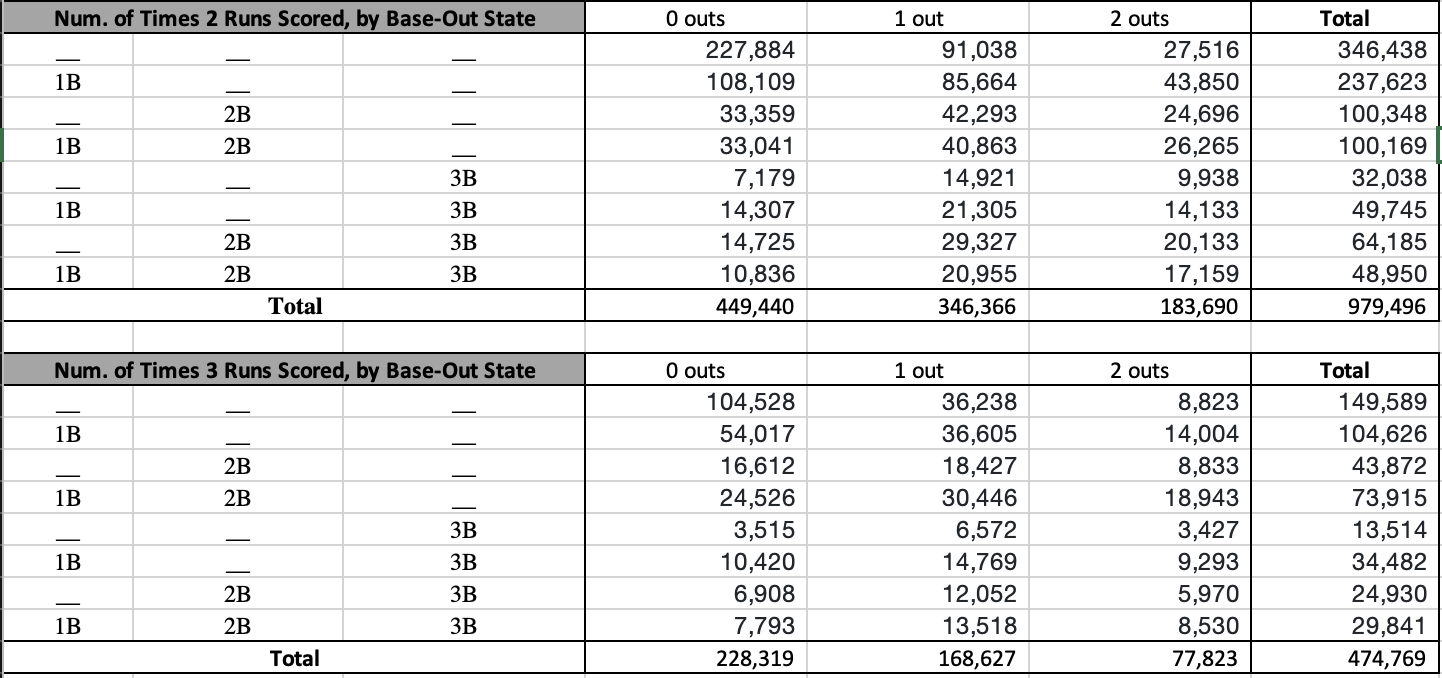

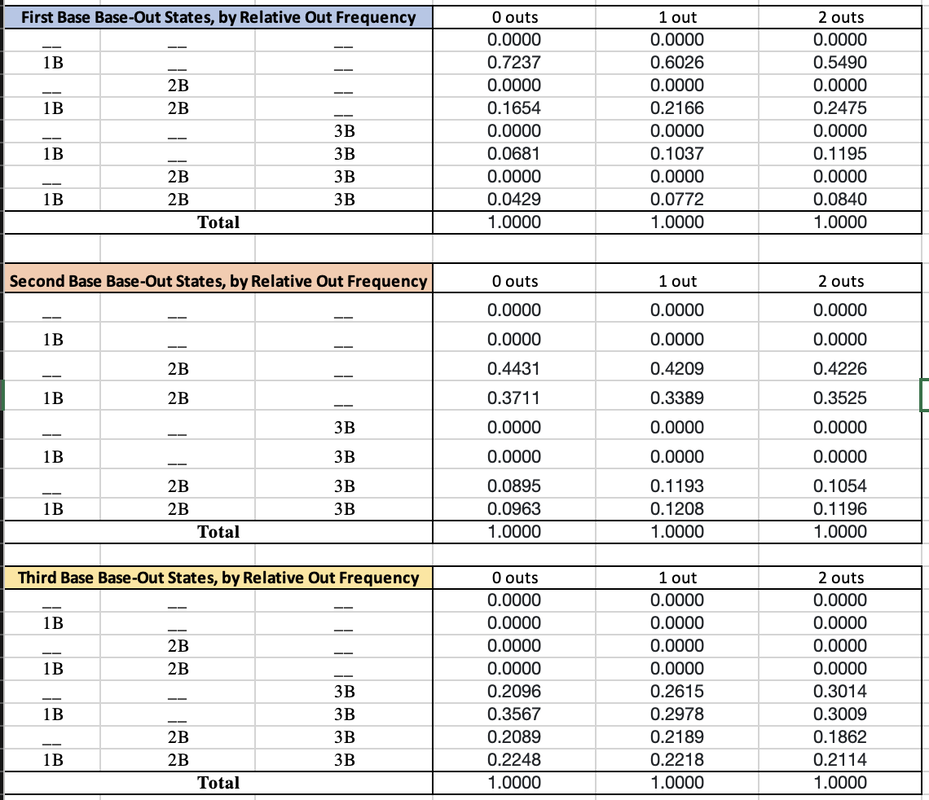

In my last post I introduced my Player Value metric, which seeks to be a simplified version of WAR. The jist is that we determine the run-value of each of the different events in baseball (like hitting a home run, catching a pop fly, and so forth) and then reward or dock players for each time they record those events. We then compare players to their position's first quartile values. This addendum serves to address 3 changes to Player Value since the original post. Like WAR, I intend to continuously update this metric to make it the best that it can be. Unlike WAR, I will always apply the same version of the metric to all players across time. I won't measure defense one way for Honus Wagner and another way for Andrelton Simmons. The first update was pointed out to me after I shared my metric on the r/Sabermetrics sub-Reddit. My previous "Run Driving In Value" was flawed because I was rewarding batters for the entire value of the runs that they drove in. Realistically, those players already had probabilities of scoring. Driving them in should only reward batters for the increase in probability. For example, previously I determined that there are .4057 RBI per double hit, which served as the Run Driving In Value. I was saying that in that respect, a double was worth .4057 runs. However, the probability that say a man on 2nd with 0 outs will score is already 62.11%. If you hit a double and drove the runner in, you shouldn't get credit for the entire run. Rather, you should only be rewarded for the 1-.6211 = 37.89% additional probability. To this end, I have removed the Run Driving In Value from the metric weights and concentrated it all into the Baserunner Effecting Value. I calculate the probability increase of going from 3rd to home on a single the same exact way that I previously calculated the increase of going from 2nd to 3rd on a single. The only change is that I'm not intentionally ignoring the scoring scenarios like I was before, since I'm no longer relying on the Run Driving In portion to cover it. The second update was one improvement that I noted I could make immediately at the bottom of the last post. I previously assumed that all events that put you on the same base gave you the same probability of scoring. This is to say, I assumed that a single and an intentional walk gave you the same probability of scoring, since you end up at first base for both. However, the frequencies with which these events occur leave themselves to be differently conducive at scoring runs. For singles, 24.63% of them occur with nobody on and 0 outs, resulting in you being on first with nobody else on and still 0 outs. This situation gives you a 42.64% shot at scoring. For intentional walks, 29.32% of them occur with a man on 2nd and 2 outs. This puts you at 1st with 2 outs and a man on 2nd after you've been intentionally walked, giving you a probability of scoring of just 11.91%. Clearly, intentional walks take place more commonly in situations where scoring is less likely. The same is true for our other events; base-out state frequencies play a role in our events' probabilities of scoring. Because of this, I have adjusted the Run Scoring Value for all of the events. The Run Scoring Value is now the weighted average probability of scoring of each scenario. You can see a calculation example with singles below: I already mentioned the nobody on, nobody out example above. For another example, about 6% of singles take place with a man on 1st out and 0 outs. When the single is hit, we assume all baserunners will advance as many bases as the batter does (in this case, one). That makes the new situation men on 1st and 2nd with still 0 outs. For the guy on first, this gives him a 40.95% chance of scoring, which is multiplied by the likelihood that scenario occurs (6%) to get the weight of .0246. Adding these up across all possible scenarios, we get the weighted average probability of scoring after hitting a single of 27.74%. The same process was applied to all events. Since I changed the probability of scoring for each event, this also impacted the Future Batters Effecting Value for each event, but the calculation for that piece remained the exact same. The new baseline probability of scoring when you walk up to the plate is 11.24%, and the new Future Batters Effecting Value for outs is -.1080. So I've updated the Run Scoring Value for each event, which impacted the baseline probability of scoring. Thus each event has a new Run Scoring Value Over Baseline. I essentially merged the Run Driving In Value with the Baserunners Effecting Value, and changed the method by which I calculated the Run Driving In Value piece. Lastly, the change in the baseline probability of scoring also impacted the Future Batters Effecting Value for the different events. The third update that I did was also thanks to the r/Sabermetrics sub-Reddit. I was previously weighting the value of an error as the difference between a "non-HR hit" and an "other Out". Realistically, it is unlikely that an error would result in a batter reaching 3rd base solely due to the error. An error will likely put a guy on 1st, and maybe on 2nd if an outfielder drops a flyball or something. To account for this, I calculated a "1B or 2B hit" value that is the weighted average value of a single and double. I now calculate the error as the difference between a "1B or 2B hit" and an "other out". This makes the new value of an error -.6797. You can see the result of these 3 updates in the new run-value weights for each event below: Some events like wild pitches, balks, and passed balls were unaffected by these updates. Most events were changed slightly, but not drastically. The event that had its value change the most was the sac fly, from .6274 to -.0972. This is because I was previously rewarding the batter an entire run for driving the guy on 3rd in. However, the baserunner on 3rd has already done the bulk of the work for that run. Moreover, since there are only 0 outs or 1 out, the guy on 3rd already has a high probability of scoring, even for "being on third base" standards. Bringing him in via sac fly only marginally increases the probability, which gets outnumbered by the detrimental value of the additional out on any remaining baserunners or future batters. The overall effect of these updates is a more accurate measure that intuitively makes more sense. However, there is also an effect on the its ability to describe runs scored and runs allowed per game. Batting Value Average previously had a correlation with runs scored per game of .9341, with an R^2 of .8726. After these updates, it now has a correlation with runs scored per game of .9463 and an R^2 of .8956. So, we improved! When we previously added Baserunning Value Average into the mix, we got an adjusted R^2 of .9071 when running a multiple linear regression to predict runs scored per game. After the updates, we now have an adjusted R^2 of .9238. Again, on the offensive side we see improvement with these updates. Pitching Value Average previously had a correlation with runs allowed per game of -.9217, with an R^2 of .8495. After these updates, it now has a correlation with runs allowed per game of -.9199 and an R^2 of .8462. So, we got a little worse on the pitching side. When we previously added Fielding Value Average into the mix, we got an adjusted R^2 of .8757 when running a multiple linear regression to predict runs allowed per game. After the updates, we now have an adjusted R^2 of .861. Thus overall on the defensive side we lost some performance with these updates. Offensively, we went from describing 90.71% of runs scored per game to 92.38%, a gain of 1.67%. Defensively, we went from describing 87.57% of runs allowed per game to 86.1%, a loss of 1.47%. Thus overall, we increased the descriptive ability of the Player Value metric by 0.2%. This increase is pretty marginal on the measurement side, but again remember that correlation isn't everything; OPS isn't better than wOBA. Having a process that intuitively and mathematically makes more sense is more important, and that is what was really improved through these updates. Below is an updated comparison of my metric's weights with those of other metrics: Thank you for reading this addendum to Player Value. I look forward to applying this to players in the 2010 season and sharing the results! As always, let me know any thoughts you may have in the comments below. See below for updated versions of the files that I shared in the initial Player Value post:

Statting Lineup Newsletter Signup Form:

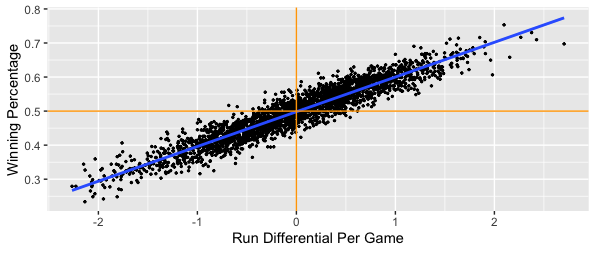

If you'd like to receive email updates for each new post that I make, sign up for the Statting Lineup newsletter using the link below: https://weebly.us18.list-manage.com/subscribe?u=ab653f474b2ced9091eb248b1&id=3a60f3b85f In my previous post, I wrote out a long and detailed explanation of what WAR (Wins Above Replacement) is, how it is calculated, and what my stances were on different pieces of that calculation. In short, I believe that WAR is too commonly used and relied upon for how complex and nontransparent the calculation is. I also believe that some of the decisions made in even the transparent parts of the calculation are questionable, such as relying on FIP (Fielding Independent Pitching) for a key portion of pitcher WAR. Because of this, for the past several months I have been working on a new cumulative metric for player value. This post will be dedicated to describing the formulation of this metric, at both a high-level (for you non-mathy baseball fans) and in greater detail (for my fellow math nerds). The overall goal of this metric is to serve as a substitute for WAR. My belief is that this metric is more transparent and more easily calculated than WAR. Furthermore, I believe this metric is more reasonably used to compare players across different periods of the game. While WAR in all its complexities may be better at describing the best players currently, it is flawed in that it changes how it measures players over time. My metric uses the same basic, recordable information to assess players over all of baseball history. **Please note that portions of Player Value have been updated. Refers to this addendum for details** Overview The primary inspiration for the metric was wOBA, or Weighted On Base Average. If you'll recall from my previous post about WAR, I described wOBA in detail there. To summarize, wOBA is a superior offensive rate statistic than its traditional rivals of batting average, on-base percentage, slugging percentage, and even OPS (on base plus slugging). Batting average incorrectly ignores walks and assumes that all hits are equal. Obviously, walks have offensive value and home runs are better than singles. On-base percentage improves by accounting for the value of walks, but still incorrectly assumes that all hits are equal. Slugging percentage acknowledges the superiority of different types of hits, but messes these weights up. A home run isn't 4 times better than a single, and a double isn't 2 times as good as a single. Furthermore, slugging percentage also regresses from on base percentage's improvement by going back to ignoring walks. OPS combines on base and slugging and thus gives value to walks and treats hits differently. This makes OPS the best yet, but it still has the weights of our events wrong. Then there is wOBA, which more accurately uses the actual run-values of the different types of events. The offensive side of my metric also relies on the run-values for determining these weights, but I took a different approach than Tom Tango in calculating them. Recall from my previous post that wOBA relies on the average changes in run expectancy to determine the run value of events. If that sounds confusing to you and you'd like more details, feel free to look into the 'Details' section below, or view the wOBA portion of my previous blog post. Before I summarize how I determined the run-value of each event, you may be wondering why do we rely on runs to determine player value? That is because runs are the fundamental measurement and currency of baseball. The ultimate goal for a team is to win the most games, and within any game the team with the most runs wins. This means teams should try to maximize their runs scored each game, and minimize their runs allowed each game. Truly, the difference between a team's runs scored per game and its runs allowed per game is very indicative of it's ability to win games: We clearly see that in general, teams that score more runs per game than they allow will have higher winning percentages. Specifically, a team's run differential per game has a correlation of 0.945 with its winning percentage. That is very close to a perfectly positive relationship, which would mean that run differential per game and winning percentage are directly linearly related. If I run a simple linear regression and use run differential per game to predict winning percentage, I get an R^2 value of 0.8931. This mean's that run differential per game accounts for 89.31% of the variability in winning percentage. If you don't know much about linear regression, don't worry; just take this as that run differential per game can explain about 90% of a team's ability to win. Most of the remainder is likely just due to the fact that a team can have individual games where they greatly outscore their opponents (or get outscored by their opponents) that throw the run differential per game off. The key is to have your runs scored per game be consistently greater than your runs allowed per game; if this is always the case, you'll never lose! Another important caveat is understanding player opportunity. At a team level, we care about runs scored and runs allowed. At a player level, we need to understand the strong bias of relying solely on runs scored and runs allowed for measuring value. Players that score more runs or drive more runs in (RBI) will still generally be better, but these values can be skewed. You can be a much better player and still score fewer runs and have less RBI. If your team has worse hitters that can't drive you in as well, you'll probably score less runs than an equivalent player on a better team. Likewise, if your teammates hardly ever get on base for you to drive them in, you'll probably have less RBI than an equivalent player on a better team. The same is true for pitchers; pitchers on a bad defensive team will probably allow more runs and earned runs. Earned runs only account for errors, and there's more to fielding than avoiding errors. To conclude, I could just say that the best batter is the one with the most runs scored per game and RBI per game, and that the best pitcher is the one with the lowest runs allowed per game or earned runs allowed per game, but both of those would be flawed. Obviously measuring by plate appearance or inning could be better, and these metrics would still have some merit, but we can do better. Furthermore, how would you measure defense? Now that I've hammered down why we care about run values (but not runs specifically) so much, let's get into how I derived my run-values, at a high level. The run values for my offensive events were calculated from 4 distinct pieces:

**Per this addendum, Run Driving In Value is no longer used and its respective pieces have merged into Baserunner Effecting Value** I'll take a moment to describe each of these pieces at a high level.

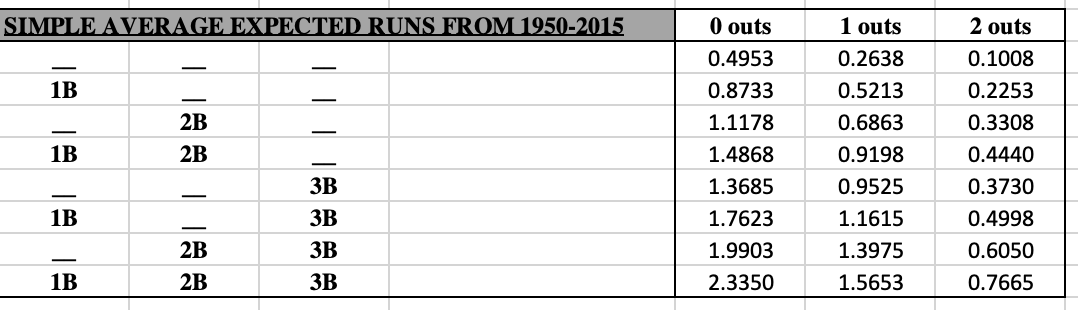

Now that I've explained these 4 pieces, here is a table that shows the run values by piece for each offensive event, as well as the total run value of the event: **View this addendum for the updated weights of each event** An 'other Out' is an out that is not a strikeout, a sac bunt, a sac fly, or a groundball double play. From a player's standard batting stats, this would be calculated as AB - H - SO - GIDP. An 'uBB' is an unintentional walk, calculated simply as BB - IBB. A 'non-HR hit' is the weighted average of a single, double, and triple. This had to be done because most standard pitching datasets do not include specific hit types against pitchers, only hits and home runs. This is the case for the Lahman package in R, which was the source I used when applying this metric on actual player data. Note that the value of an error is the difference between the value of an other Out and the value of a non-HR hit. This means that the value of an error is -.8326 runs. **Per this addendum, the value of an error is now -.6797 runs** For stolen bases and caught stealings, the Run Scoring Value section is the increase or decrease in the probability of scoring that you receive when either stealing a base or being thrown out. For pitchers, the applicable inverses of these values are used. So for pitchers, any of our hit types or walks are negative values, but any of our out types are positive values. Pitchers also get docked -.2622 runs for each wild pitch and -.245 runs for each balk. For fielding, putouts and assists are treated as 'other Outs', but again the inverse value. Unassisted putouts get the full value of the out, while assisted putouts for first basemen only get 20% of the out value. Assists only get 80% of the out value. These values are purely subjective, with the intuition that a first baseman that just needs to walk a step or two to the bag and then catch a ball thrown at his chest likely has it easier than the fielder that has to run to the ball, field it, and then make a throw to first. I initially was leaning towards a 75%/25% split, but I surveyed the r/Sabermetrics sub-Reddit and found that most of my peers think the split should either be 80/20 or 90/10. With that in mind, I settled on the 80/20 split. Obviously not all assisted putouts require little effort by first basemen, such as when they need to make a scoop play or stretch out far for the catch. Catcher putouts via strikeout only get 33% of the out value. This was also subjective; I figured catchers should get a little more credit since they also play a role in calling pitches and in making balls become strikes via framing. This means that an unassisted putout is worth .3137 runs, an assisted putout for first basemen is worth .3137*.2 = .06274 runs, and an assist is worth .3137*.8 = .25096 runs. I will also note that the # of unassisted putouts by first basemen or the # of catcher putouts that are via strikeouts is not widely available information. I looked at these trends overtime at a team level and generally found that about 90% of all putouts by first basemen are assisted, and that about 93% of all putouts by catchers are from strikeouts. I will note that this assumption is more stable over time for first basemen than it is for catchers, since strikeout rates have been increasing over time. Since for double plays fielders also get credited for the corresponding putouts and assists, double plays for fielding only get the additional value that a double play would bring. A double play means 2 outs, which at face would have a value of 2*.3137 = .6274 runs. However, we see above that a double play is actually worth .7529 runs, so for each fielder involved in a double play we credit them .7529-.6274 = .1255 runs. Catchers get docked -.2622 for each passed ball and -.1469 for each base stolen on them, but get credited .4242 runs for each runner that they throw out. So the fundamental idea of my metric is that we have all of these different traditional recorded baseball events that we've used to evaluate players for many years. We know that more homers are preferable than less, and that more strikeouts by batters is not preferred. What we haven't known is how all of these events compare to each other. What was more impressive, Roger Maris hitting 61 homers or Rickey Henderson stealing 130 bases? Well, 61 homers is worth 61*1.4508 = 88.4988 runs, whereas 130 stolen bases is only worth 130*.1469 = 19.097 runs. What was worse, Jim Rice grounding into 36 double plays or Mark Reynolds striking out 223 times? Well, 36*-.7529 = -27.1044 runs and 223*-.3362 = -74.9726 runs. So things like stealing bases and grounding into double plays can't make or break a season, but can certainly make some players more or less valuable. That's the bones of my metric; we see what players have done, and we are now aware of how relatively valuable those things are, so we can determine which players were the most valuable. The other big piece of my metric is the comparison. WAR of course compares players' values to a mathematically-backed-into 'replacement' level. My metric instead compares to the first quartile, or 25th percentile, value. This is the value that 75% of players are greater than. I believe that this comparison is more straightforward, easier to calculate, and more statistically sound. Comparing values to quartiles or percentiles is a very common practice across all areas of statistics. Comparing values to arbitrary baselines is much less common. Additionally, quartiles are nice because they work like the median in controlling skewed distributions and outliers much better than the mean (what you probably think of as 'average'). Also note that instead of comparing players across a league-wide average (mean) and then adjusting for replacement level (which is what WAR does), I compare players to the league first quartile at their position. So consider the case of rightfielders in 1921. Babe Ruth absolutely dominated his position, leading by a wide margin with 54 homers. The positional mean number of homers would be about 10. That would put only 4 players above average, but 10 players below average. Put another way, Babe would be 44 homers above the mean, and the worst guy (Nemo Leibold, who hit just 1 HR in 480 PA) would be 9 homers below the mean. If we use the median HR value of 6.5 instead, we'd have 7 guys above average and 7 guys below average. Babe would be 47.5 homers above the median and Nemo would be 5.5 homers below the median. The problem with using the mean is that it allows larger values to skew what is considered "average". The mean is much higher at 10 home runs solely because Babe's excellence drove it up. The median is lower because it is just the middle value; it doesn't care how many homers Babe hit. By comparing Babe and Nemo's home run counts to the mean and median, we can see the effect of using both. The mean is more so punishing Nemo, while the median is more so rewarding Babe. Since Babe was the one that performed so greatly, I think it's better to use the measure that rewards him. It also makes more sense because now we have the same # of guys above and below average. This is a common procedure in the statistical world; when a distribution is highly skewed, rely on the median as the measure of average rather than the mean. Since great players have the ability to skew distributions, it's better to use the median. And again, the first quartile works the same way as the median; instead of the middle value, it's the value that's a quarter of the way in. The first quartile is used for largely the same mathematical reason that replacement level is used in WAR. We need a value to compare players to, but we don't want to use an average because being average actually has value. If you have the 15th best catcher in the league, you shouldn't be eagerly looking to get rid of him; he's better than half of the other guys around! If we compare to average, it makes our actual average players (that have a full season of data) look the same as guys that hardly played. There is tremendous value in being able to play at a decent level for a full season. By comparing to a lower level, we reward the average players for playing and recognize their value over a player that only played in a handful of games. WAR makes up a player and quantifies the level that he plays at as 'replacement' and compares players to him. I compare players to their contemporaries, specifically the bottom 25%. What sounds better to you: we should replace our catcher because he's worse than this made up, mathematically defined replacement player, OR we should replace our catcher because he is one of the 7 to 8 worst catchers in the league? The comparison to positional values is done because different positions demand different inherent qualities. A second basemen that hits many homers is unique (Mac from It's Always Sunny in Philadelphia will be the first to tell you), provided that he can still adequately play second base. If we compare to league-wide average, this becomes less impressive as the league wide average HR value gets flooded with corner outfielders and infielders, where power is more expected. Here's a snip of how some of the first quartile offensive event counts varied by position in 2010: A second basemen that can adequately play the position on defense but hit 20 homers would look great compared to most second basemen, but not so much if we were to throw first basemen, right fielders, and designated hitters into the comparison. That's all there really is to it. Note that there are technically 3 ways that you could view my metric. One is to take the run-value weights and apply them to a player's absolute counts, ignoring the comparison to the positional first quartile. This doesn't help us measure players that may have played well, but only for a limited time such as due to injury, etc. Another way would be to apply the weights without comparison, but measure it on a rate basis. This would give us a value that is more like wOBA, batting average, ERA, or fielding percentage. We'd be able to tell which players are best when they play, but we wouldn't be rewarding players that play more. The final and preferred way is more comparable to WAR, whereby for each offensive and defensive event type, we see how many of that event a player recorded, we compare that value to his position's first quartile value, and then multiply that difference by the actual run value of the event. As a quick sneak peak of my next post where I'll go over the results of applying my metric to the 2010 season, if I rely on the absolute version, I get that the best batter was Miguel Cabrera. If I rely on the rate version, I get that the best batter was Gustavo Chacin, who hit a home run in his only plate appearance. The guy with the highest rate and a reasonable # of PAs for a season was Josh Hamilton. Hamilton was 2nd when using the absolute version; Cabrera only did more "good" offensive things because he had more plate appearances. Lastly, if I compare to positional first quartiles, I again see that Hamilton was the best batter. Cabrera actually comes in 3rd, with Carlos Gonzalez in 2nd because the quality of his batting was more valuable coming from an outfielder than Cabrera's was coming from a first basemen. I will acknowledge that I don't claim 4 decimal precision with these weights. To say that I definitively believe that a HR is worth 1.4508 would be a little absurd. Rather, when applying my metric and thus the weights on actual player data, I round the weights up to 2 decimal places. So when I was measuring Hamilton's and Cabrera's home runs in 2010, I weighted each one as being worth 1.45 runs. I won't go over the absolute version equations, since those are basically just the numerators of the rate equations. But below you can see the equations for each piece of Player Value, as well as the rate versions of the equations: **The weights used in these equations reflect the original methodology. Refer to this addendum for the updated weights to be used in the equations.** That is it for the overview. Feel free to skip the Details section and scroll to the end if you have any comments, or if you want to take a look at some of the files that show my work. If you want to see how the sausage was made, move on to the Details section below. Details As mentioned above, wOBA and the idea of a run expectancy matrix served as the initial inspirations for my metric. Recall that wOBA weights events based on their run value, as measured by the average change in run expectancy as a result of that event in a particular season. You can look at some of the run expectancy matrices that Tom Tango developed for 4 different periods here. My metric doesn't fluctuate each year or even across periods, so I created a simple average of these 4 matrices. You can view this simple average run expectancy matrix from 1950 to 2015 below: This table means that with nobody on and 0 outs, a team is expected to score .4953 runs that inning. If I were to hit a double and make the situation a man on 2nd with 0 outs, then my team is now expected to score 1.1178 runs that inning. That means I increased my team's expected runs that inning by 1.1178 - .4953 = 0.6625 runs, so my double is worth .6625 runs. However, not all doubles occur in this same situation, so the total change from all doubles in a season are added up and divided by the total number of doubles. This gives us the average change per double, which is the run value we'd use for doubles for a particular season. This process is repeated for each offensive event, each season. wOBA then shifts these values up by the value of an out so that an out becomes worth 0. This puts wOBA in a similar context of the normal metrics like batting average, on-base percentage, slugging, and OPS. Lastly, these values are divided by what is called the 'wOBA scale', which is the value that sets the league average wOBA equal to the league average on-base percentage. This means that wOBA in practice does not use run values for event types such as outs, even though Tango had computed them (here is an example using data from 1999 to 2001). You can compare Tango's values to mine and see that we aren't that far off. Besides the differences in how the run values are calculated, which I'll go into next, some other key differences between my metric and wOBA are: